Welcome!

Homepage & blog of Dr Caterina Constantinescu | Data professional. Psychologist.

I’d long been curious about web scraping and am pleased to have finally made a start in this direction. Previously, any scraping job I needed was carried out via import.io, but now I’ve branched out to Scrapy. I’d also wanted to practise my use of Python, so this was a great opportunity to kill two birds with one stone. Here I’ll share my first foray into this area. It may be useful for others who are also starting out (as well as for my future self, as a reminder).

If you attended my talk on “Generalised Additive Models applied to tourism data” at the Newcastle Upon Tyne Data Science Meetup in May 2019, please find my (more detailed) slides linked below.

Some context: I’d been curious about generalised additive (mixed) models for some time, and the opportunity to learn more about them finally presented itself when a new project came my way, as part of my work at The Data Lab.

I’ve recently presented a toy Shiny app at the Edinburgh Data Visualization Meetup to demonstrate how Shiny can be used to explore data interactively.

In my code-assisted walkthrough, I began by discussing the data used: a set of records detailing customer purchases made on Black Friday (i.e., each customer was given a unique ID, which was repeated in long format in the case of multiple purchases). Both the customers and the items purchased are described along various dimensions (e.

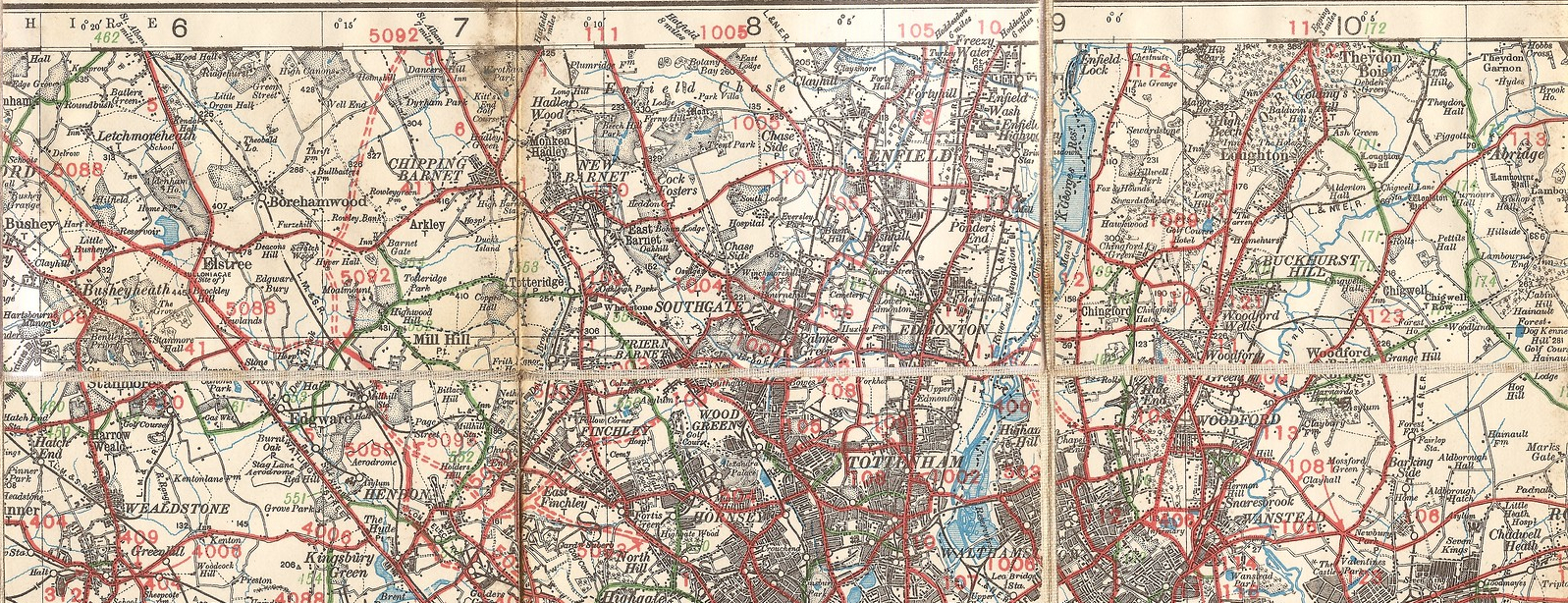

Project aims: As part of The Data Lab, I worked on a project for visualising the traffic flow within a subsidised transport service, operated by a Scottish council. This visualisation needed to display variations in traffic flow conditional on factors such as the time of day, day of the week, journey purpose, as well as other criteria. The overall aim here was to explore and identify areas of particular activity, as well as provide some insight into how this transport service might be improved.

The Accelerator programme: The Accelerator programme, run by The Data Lab between 19 April 2018 and 06 September 2018, was a Scottish Government collaborative project, open to employees of the Scottish Government, the Information Services Division, the National Records of Scotland and Registers of Scotland. Employees applying to take part had a background in statistics, economics, operational research or social research, and sought to improve their data skills across a variety of areas.

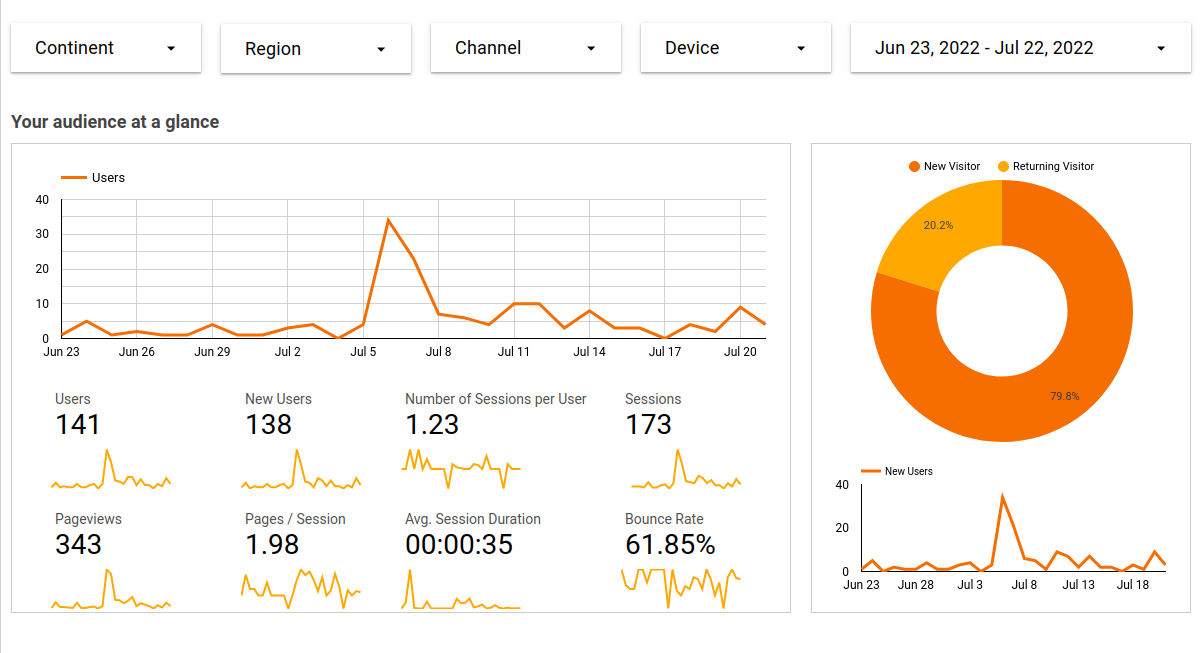

If you’ve wondered how page views may vary in response to website changes, you’ve come to the right place. Setting up your website around a GitHub repo (see options: Netlify + Hugo, and GitHub Pages + Jekyll) is a great way to ensure that this is a smooth process. The beauty of relying on GitHub to store your site is that you are creating an effortless log of site changes as you go, without having to devote attention to this as a separate process.

DataPowered